Almost nothing will go untouched by artificial intelligence (AI) in healthcare and surgery, notably in the quickly evolving world of vascular and endovascular surgery, Elsie Gyang Ross, MD, told the American College of Surgeons (ACS) Clinical Congress 2020 (Oct. 3–7).

Ross, an assistant professor of vascular surgery and a research scientist focused on machine learning at Stanford University Medical Center in Stanford, California, was presenting on the current state of surgical AI, outlining solutions that are either currently available or on the horizon for pre-, intra- and postoperative care.

Ross ran through applications in radiology, pathology and vascular surgery, as well as in intraoperative imaging and bleeding, robotic surgery, postoperative acute care and palliative care.

Though many solutions remain unavailable, she said, the incorporation of AI into healthcare is accelerating.

“Currently, there are limited applications of AI for the surgical patient when we’re looking purely at clinically available commercial solutions,” Ross told the ACS Clinical Congress. “[But] many new applications are on the horizon, making this a very exciting time in the development phase of surgical AI.”

Ross defines AI as the application of mathematical algorithms toward automated problem solving. “You can call the algorithms any number of things, such as learning algorithms—as a matter of fact a logistic regression can be applied toward automated problem solving, and this system can be considered AI as well—but the idea is you’re using some mathematical tool to automate a decision or process.”
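To make the logistic regression point concrete, here is a minimal sketch of such a model being used to automate a yes/no decision. It is an illustration only, not anything Ross presented: the risk scores, outcomes, 0.5 threshold and the function names `fit_logistic` and `flag` are all hypothetical.

```python
import math


def sigmoid(z):
    """Map any real number to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(xs, ys, lr=0.1, epochs=2000):
    """Fit a one-feature logistic regression by batch gradient descent."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Prediction error for every example under the current weights.
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, ys)]
        grad_w = sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def flag(x, w, b, threshold=0.5):
    """The automated decision: flag the case if predicted probability is high."""
    return sigmoid(w * x + b) >= threshold


# Synthetic, made-up data: one numeric risk score per case, and whether
# the outcome of interest occurred (1) or not (0).
scores = [0.5, 1.0, 1.5, 2.0, 3.0, 3.5, 4.0, 4.5]
outcomes = [0, 0, 0, 0, 1, 1, 1, 1]

w, b = fit_logistic(scores, outcomes)
low_risk_flagged = flag(0.5, w, b)
high_risk_flagged = flag(4.5, w, b)
```

The fitted model is just two numbers, `w` and `b`, yet once a threshold is attached it makes a decision without human input, which is the sense in which Ross counts it as AI.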

In healthcare, the degree of automation can range from minimal or none, through partial automation such as assistive surgery, to fully automated surgery. Machine learning algorithms put to work include supervised learning, unsupervised learning and deep learning, Ross explained.

At present, the field is in the process of figuring out “how to augment the abilities of humans who are doing the day-to-day work of taking care of patients,” she said.

Radiology has seen the most progress in the use of AI, Ross went on. As a case in point, she cited a collaboration between the University of California, San Francisco (UCSF), and GE Healthcare to address lags in x-ray reads during critical time windows. They developed AI software that can “identify pneumothorax on a chest x-ray and alert the technologists and radiologists, and bring the x-ray up the queue so that it’s read much quicker than it normally would be.” This solution gained Food and Drug Administration (FDA) clearance in 2019, she added.
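The idea of bringing a flagged x-ray "up the queue" can be sketched as a priority queue. This is a hedged illustration of the general technique, not the UCSF and GE Healthcare implementation: the `ReadingWorklist` class, the study IDs and the `suspected_critical` flag (standing in for the output of an upstream detection model) are all hypothetical.

```python
import heapq
import itertools


class ReadingWorklist:
    """Toy reading queue: flagged studies are read first, otherwise first in, first out."""

    def __init__(self):
        self._heap = []
        # Monotonic counter preserves arrival order among equal priorities.
        self._arrival = itertools.count()

    def add(self, study_id, suspected_critical):
        # Lower number = read sooner. The flag is assumed to come from
        # a separate detection model, which is not modeled here.
        priority = 0 if suspected_critical else 1
        heapq.heappush(self._heap, (priority, next(self._arrival), study_id))

    def next_study(self):
        """Remove and return the ID of the study that should be read next."""
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)


worklist = ReadingWorklist()
worklist.add("cxr-001", suspected_critical=False)
worklist.add("cxr-002", suspected_critical=False)
worklist.add("cxr-003", suspected_critical=True)  # arrives last, flagged

reading_order = [worklist.next_study() for _ in range(len(worklist))]
print(reading_order)  # ['cxr-003', 'cxr-001', 'cxr-002']
```

The flagged study jumps ahead of the two that arrived before it, while unflagged studies keep their original order.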

“The field of vascular surgery and the endovascular repair of abdominal aortic aneurysms has been quickly evolving. As we’ve been able to fix more complex aneurysms, we’ve needed intra-operative assistance for the proper placement of stent grafts,” Ross continued.

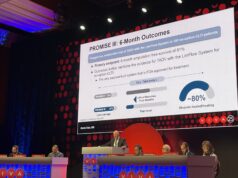

One example is a Cydar Medical AI solution that models how vessels move and are deflected by the introduction of different wires and instruments, she said. “Preliminarily, use of this technology has been found to decrease important metrics for vascular surgeons, such as radiation exposure, fluoroscopy time and procedure times.”

Elsewhere, in the sphere of intraoperative bleeding, AI technology has been employed to quantify blood loss based on the soiling of lap pads. “And at least in the obstetrics literature, this solution has been preliminarily shown to improve time to identifying hemorrhage, leading to a reduction in delays in intervention,” Ross told the ACS gathering.

“And while we can talk all day about robotic surgery and AI,” she said, “I want to highlight that not only are AI solutions being developed for robots, but there is robotic hardware now being developed in synergy with AI software. In Microsure’s case, they have developed the world’s first microsurgery robot with software and hardware that can stabilize a surgeon’s movements to allow for more precision across a broader spectrum of a surgeon’s skill. In recent reports, this technology platform has been used to successfully perform lymphatic surgery.”

Postoperative acute care is another important area where AI can lead to improved patient outcomes once solutions become commercially available, Ross continued. “Though computer vision is often discussed for intraoperative possibilities, recent work shows that computer vision will play a big role in postoperative care as well.”

Researchers at Stanford demonstrated that depth cameras placed in intensive care units (ICUs) were able to accurately quantify patient mobility. “That can be extremely important in recovery,” Ross said. “Their AI model could also decently identify how many personnel were required to enable such patient movement. We currently have multiple more cameras going up in our ICUs that will use depth and heat mapping amongst other capabilities to monitor ICU care and recovery.”

Lastly, Ross touched on AI and palliative care. “As surgeons, we’d love it if we could save everyone, but, in reality, we do have to make difficult decisions about individual patients,” she said. “We’re so close to these decisions sometimes, it can be hard to see the patient holistically, or in busy practices it can be difficult to make these assessments as well. Even though it sounds counterintuitive, computational algorithms can help. Applying deep-learning algorithms to the task of identifying which patients will die within three to 12 months can provide pretty accurate results. We currently have such a model running here at Stanford, which helps our palliative care teams prioritize patients to see and consult once they have permission from treatment teams. This all to say nearly nothing will go untouched in the AI world in the future.”

For those perhaps wary of a complete machine takeover, meanwhile, Ross had some words of comfort. “If you take nothing else away from this talk, based off the current state of AI, surgeons still have great job security,” she quipped as she concluded.